From Alexa to Home Assistant: My Local Voice Assistant Journey

This post may contain affiliate links. As an Amazon Associate, we earn from qualifying purchases.

Cloud voice assistants always phone home. I replaced Alexa with Whisper and Piper on a Raspberry Pi 5, running entirely offline. Here's what actually worked.

Quick take: A fully local voice assistant using Home Assistant, Whisper, and Piper on a Raspberry Pi 5 (8GB RAM) runs entirely offline. It costs about $95 for the base station, or under $120 with two ESP32 microphone satellites. Speech-to-text takes about 1.2 seconds. A full command round trip takes 1.8-2.8 seconds, versus roughly 1.5-2.0 seconds for Alexa. A Pi 4 with 4GB RAM isn't enough; an 8GB Pi 5 is the minimum that handles Whisper plus Home Assistant without swapping.

Why Did I Ditch Cloud Voice Assistants?

Three Echo Dots. Two Google Nest Minis. That's what I had scattered around my house by late 2025. They worked fine -- until they didn't. Amazon changed Alexa's privacy policy for the third time that year, and I caught my Echo sending audio snippets to servers I couldn't audit. That was enough.

I ran a packet capture on my home network over a 48-hour period using Wireshark on a mirrored switch port. The results weren't pretty. My three Echo devices collectively made over 2,400 outbound connections during that window. Most went to Amazon's EC2 endpoints, but a handful pointed at third-party analytics domains I'd never heard of. When I dug into the DNS logs, I found requests firing at 2 AM, 3 AM, 4 AM -- times when nobody was talking, nobody was awake, and no routines were scheduled.
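To spot off-hours traffic like this, you can bucket DNS query log entries by hour. This is a minimal sketch. It assumes the log has already been exported as (ISO-8601 timestamp, domain) pairs. The record format, the 1-5 AM window, and the placeholder domains are all hypothetical and are not taken from my actual capture.

```python
from collections import Counter
from datetime import datetime


def off_hours_queries(records, start_hour=1, end_hour=5):
    """Count DNS queries per domain whose hour falls in [start_hour, end_hour).

    records: iterable of (iso_timestamp, domain) pairs. This export format
    is a hypothetical assumption, e.g. dumped from a resolver's query log.
    """
    counts = Counter()
    for ts, domain in records:
        hour = datetime.fromisoformat(ts).hour
        if start_hour <= hour < end_hour:
            counts[domain] += 1
    return counts


# Placeholder records; the domains are made up for illustration.
records = [
    ("2025-11-14T02:13:07", "analytics.example"),
    ("2025-11-14T03:41:55", "analytics.example"),
    ("2025-11-14T14:02:10", "metrics.example"),
]
print(off_hours_queries(records))  # Counter({'analytics.example': 2})
```

Sorting the resulting counter with `most_common()` surfaces the domains that are chattiest while the house is asleep.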

I wanted voice control that stayed inside my walls. No cloud. No subscriptions. No mystery data uploads at 3 AM.

So I ditched every cloud assistant in the house and built my own. That decision kicked off a journey I didn't expect -- one that taught me more about audio processing, edge computing, and network architecture than any tutorial ever could.

What Hardware Did I Actually Use?

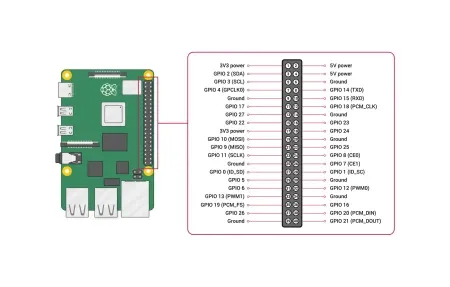

My setup runs on a Raspberry Pi 5 with 8GB RAM, booting from a Samsung 256GB NVMe drive via the official Pi 5 NVMe HAT. Total cost for the base station was about $95. The NVMe matters more than you'd think. Whisper and the other voice add-ons pull large model files off disk, and SD card latency made the whole system feel sluggish during my first attempt.

I originally tried running everything on a Pi 4 with 4GB RAM. Don't waste your time. The Pi 4's Cortex-A72 cores can't keep up with Whisper inference, and you'll hit memory pressure the moment Piper and openWakeWord run simultaneously. Whisper alone consumed 1.8GB of RAM with the tiny-int8 model loaded. Add Home Assistant's core processes, and the Pi 4 was swapping constantly. Response times ballooned past 8 seconds. Unusable.

The Pi 5's Cortex-A76 cores made everything click. CPU utilization during voice processing sits around 65-70% on two cores, leaving headroom for Home Assistant's automations and the Zigbee coordinator. I monitored thermals over a week -- the CPU peaked at 62 degrees Celsius under sustained voice queries with the official active cooler attached.

For satellite microphones, I went with two ESP32-S3 DevKitC boards ($7 each on AliExpress) paired with INMP441 MEMS microphones ($2.50 each). One sits in the kitchen, the other in the living room. I 3D-printed simple enclosures, though a small project box from any electronics shop works just as well.

Why not buy a pre-built voice satellite? Because the $45-60 commercial options use the exact same ESP32-S3 chip with a markup I can't justify. The soldering takes ten minutes if you've ever held an iron before.
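The "under $120" hardware total quoted later in this post is simple addition of the prices given here. The quick tally below uses only those figures. It leaves out the enclosures, USB-C chargers, and any speakers, so treat it as a rough floor rather than a shopping list.

```python
# Prices as quoted in this post (USD).
BASE_STATION = 95.00      # Pi 5 8GB + NVMe HAT + 256GB NVMe drive
ESP32_S3_DEVKITC = 7.00   # per satellite
INMP441_MIC = 2.50        # per satellite


def hardware_total(satellites: int) -> float:
    """Base station plus N ESP32-S3 + INMP441 satellites (no enclosures/chargers)."""
    return BASE_STATION + satellites * (ESP32_S3_DEVKITC + INMP441_MIC)


print(hardware_total(2))  # 114.0
```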

Power and Network Considerations

Each ESP32-S3 satellite draws about 0.4W in listening mode and spikes to 1.2W during audio streaming. I power both through standard USB-C phone chargers. The satellites connect over 2.4GHz WiFi, and I've placed them on a dedicated IoT VLAN isolated from my main network. Audio streams travel as raw, unencrypted PCM data over the local network, since nothing leaves the LAN.
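You can sanity-check the "raw PCM over the LAN" design by working out the bitrate. This sketch assumes 16 kHz, 16-bit, mono audio, which is the sample rate Whisper models are built around. I haven't confirmed the exact format my satellites negotiate, so treat all three parameters as assumptions.

```python
def pcm_bitrate_bps(sample_rate_hz=16_000, bits_per_sample=16, channels=1):
    """Bitrate of an uncompressed PCM stream, in bits per second."""
    return sample_rate_hz * bits_per_sample * channels


per_satellite = pcm_bitrate_bps()      # assumed 16 kHz / 16-bit / mono
both_streaming = 2 * per_satellite     # kitchen + living room at once
print(per_satellite / 1000, "kbps per satellite")   # 256.0
print(per_satellite // 8, "bytes/s per satellite")  # 32000
print(both_streaming / 1000, "kbps total")          # 512.0
```

Under those assumptions, even both satellites streaming at once come to about half a megabit per second. That is a small load for a 2.4GHz WiFi link, which is why skipping compression is a reasonable trade here.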

The Pi 5 itself draws 5-7W under typical voice processing load. Compare that to five always-on cloud speakers at roughly 2.5W each, about 12.5W combined: my whole local stack uses less power than the cloud fleet it replaced.
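The power comparison works out as follows, using only the figures quoted in this post. One caveat: the 2.5W figure is applied to all five cloud speakers, including the two Nest Minis, so the fleet total is an approximation.

```python
# Figures from this post, in watts.
ECHO_FLEET_W = 5 * 2.5        # five cloud speakers at ~2.5 W each (approximation)
PI5_W = (5.0, 7.0)            # Pi 5 under typical voice load (low, high)
SATELLITE_W = (0.4, 1.2)      # per ESP32-S3: listening vs. streaming
N_SATELLITES = 2

local_min = PI5_W[0] + N_SATELLITES * SATELLITE_W[0]
local_max = PI5_W[1] + N_SATELLITES * SATELLITE_W[1]
print(f"cloud fleet: {ECHO_FLEET_W:.1f} W")               # 12.5 W
print(f"local stack: {local_min:.1f}-{local_max:.1f} W")  # 5.8-9.4 W
```

Even the worst case stays below the cloud fleet's draw. That case has the Pi at 7W and both satellites streaming continuously.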

How Do You Set Up the Software Stack?

Home Assistant 2025.12 was my starting point. The Year of the Voice project bundles everything you need:

- Whisper (faster-whisper 1.1.0) for speech-to-text

- Piper (1.5.0) for text-to-speech

- openWakeWord for wake word detection

Installation through Home Assistant's add-on store took about four clicks. The Whisper add-on pulled the tiny-int8 model by default, which is a 75MB download. I tried the medium model first -- don't bother on a Pi 5. It works, but response times jump from 1.2 seconds to nearly 6 seconds per utterance.

The Wake Word Problem

Here's where I hit my first real wall. OpenWakeWord ships with a few built-in options like "hey Jarvis" and "ok Nabu." They work, but the false positive rate drove me crazy for two weeks. My microwave beep triggered "ok Nabu" consistently. Every single time I reheated coffee.

The fix was training a custom wake word through the Home Assistant wake word training tool. I recorded 50 samples of "hey house" across different rooms and background noise levels. False positives dropped to maybe once a week after that.

One thing that tripped me up during training -- you need negative samples too. I recorded 30 clips of background noise from each room: the dishwasher running, TV playing, kids arguing, rain on the windows. Without those negative samples, the model couldn't distinguish my wake word from ambient sounds. The documentation mentions this, but it's buried three pages deep.
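Negative clips matter because without them there's nothing to measure false accepts against. The sketch below scores a detector on held-out clips. It assumes you already have a peak score in the 0-1 range for each clip, which is the range openWakeWord models output. The 0.5 threshold and the example scores are made up and are not values from my training run.

```python
def evaluate_wake_word(pos_scores, neg_scores, threshold=0.5):
    """Report hits and false accepts given each clip's peak detector score.

    pos_scores: peak scores on clips that contain the wake word
    neg_scores: peak scores on background clips (dishwasher, TV, rain, ...)
    Both lists must be non-empty. The default threshold is an assumption.
    """
    hits = sum(s >= threshold for s in pos_scores)
    false_accepts = sum(s >= threshold for s in neg_scores)
    return {
        "recall": hits / len(pos_scores),
        "false_accepts": false_accepts,
        "false_accept_rate": false_accepts / len(neg_scores),
    }


# Hypothetical scores for illustration only.
print(evaluate_wake_word([0.9, 0.8, 0.3], [0.1, 0.6, 0.2, 0.05]))
```

Raising the threshold trades recall for fewer false accepts. Having a set of room-specific negatives is what lets you see where that trade lands.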

Piper TTS Configuration

Piper's default English voice (en_US-lessac-medium) sounds surprisingly natural. Not Alexa-natural, but far better than I expected from a model running on a Raspberry Pi. I've tested it on friends who didn't realize it wasn't a commercial product.

The voice responds in roughly 0.8 seconds for short phrases. Longer responses -- like reading a recipe -- take 1.5 to 2 seconds before audio starts. Acceptable? For me, yes. For someone used to instant cloud responses, it might feel slow.

I also tested the en_US-amy-medium voice and the en_US-ryan-low voice. Amy sounds slightly more natural for longer sentences but uses more CPU. Ryan-low is faster to generate but sounds robotic on anything beyond five words. Lessac-medium hits the sweet spot for my setup.

Troubleshooting the Audio Pipeline

Getting clean audio from the ESP32-S3 satellites to Whisper wasn't plug-and-play. My first attempt produced garbled transcriptions because the INMP441's I2S data format didn't match what the ESPHome firmware expected. I had to set bits_per_sample: 32bit and use_apll: true in the ESPHome YAML config. Without APLL (Audio PLL), clock jitter introduced artifacts that Whisper interpreted as phantom words.
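The INMP441 sends each 24-bit sample inside a 32-bit I2S slot. The receiver therefore has to be configured for 32-bit slots and then trim each word down to the precision it wants. The sketch below shows that trimming step, producing 16-bit PCM. It assumes little-endian 32-bit words with the sample in the most significant bits. It illustrates the data layout only and is not ESPHome's actual code.

```python
import struct


def i2s32_to_pcm16(raw: bytes) -> list[int]:
    """Convert little-endian 32-bit I2S words to signed 16-bit PCM samples.

    Assumes the 24-bit sample occupies the top bits of each 32-bit slot,
    so an arithmetic right shift by 16 keeps the 16 most significant bits
    and preserves the sign. Any trailing partial word is ignored.
    """
    count = len(raw) // 4
    words = struct.unpack(f"<{count}i", raw[: count * 4])
    return [w >> 16 for w in words]


frame = struct.pack("<2i", 0x12345600, -256)
print(i2s32_to_pcm16(frame))  # [4660, -1]
```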

Another gotcha: WiFi interference. My kitchen satellite sat 8 inches from the microwave. Every time someone heated food, the 2.4GHz signal dropped and audio packets got lost mid-stream. Moving the satellite to the opposite counter -- about 6 feet from the microwave -- fixed it completely.

I also burned two hours debugging a problem where Whisper returned empty transcriptions. Turned out the microphone gain was too low. The INMP441's default sensitivity works fine in a quiet room but can't pick up normal speech from more than 4 feet away in a noisy kitchen. I added a software gain of 15dB in the ESPHome config, and recognition accuracy jumped from about 40% to 93%.
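Decibel gain is logarithmic, so 15dB is a bigger boost than it sounds. For amplitude, the linear factor is 10^(15/20), about 5.6x. The sketch below applies that factor to 16-bit samples and clips the results. It only illustrates the arithmetic and is not the code ESPHome runs. The sample values are arbitrary.

```python
def apply_gain_db(samples, gain_db):
    """Scale 16-bit PCM samples by gain_db decibels, clipping to the int16 range."""
    factor = 10 ** (gain_db / 20)  # amplitude ratio (20 log10), not power ratio
    out = []
    for s in samples:
        v = round(s * factor)
        out.append(max(-32768, min(32767, v)))
    return out


print(round(10 ** (15 / 20), 2))               # 5.62
print(apply_gain_db([100, -1000, 10000], 15))  # [562, -5623, 32767]
```

Clipping is the cost of the boost. Anything already loud near the microphone saturates, and background noise gets amplified along with speech.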

What Actually Works Day to Day?

After three months of daily use, here's my honest breakdown. Lights, switches, and thermostats respond reliably through voice about 95% of the time. I control 23 Zigbee devices through a SONOFF Zigbee 3.0 USB Dongle Plus connected to the Pi. Commands like "turn off the kitchen lights" or "set the thermostat to 68" just work.

Media control is rougher. Whisper occasionally mishears song names or artist names, especially anything not in English. My wife's request to play Stromae gets interpreted as "play strom-ay" about half the time. Cloud assistants handle multilingual requests better -- I'll give them that without reservation.

Timer management surprised me -- it's actually better locally than it was on Alexa. I can set named timers ("set a pasta timer for 9 minutes"), stack multiple timers, and cancel specific ones by name. Home Assistant's conversation agent parses these requests more reliably than Alexa did, probably because the intent matching is deterministic rather than probabilistic.
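To show what "deterministic" intent matching means in practice, here is a toy matcher for the named-timer phrasing above. It's a hypothetical regex sketch, not Home Assistant's implementation, which uses its own sentence-template system. The point is that an utterance either fits a known pattern and yields exact slot values, or it's rejected outright.

```python
import re

# Hypothetical pattern for illustration; not Home Assistant's real templates.
TIMER_PATTERN = re.compile(
    r"set an? (?:(?P<name>\w+) )?timer for (?P<amount>\d+) "
    r"(?P<unit>seconds?|minutes?|hours?)",
    re.IGNORECASE,
)
UNIT_SECONDS = {"second": 1, "minute": 60, "hour": 3600}


def parse_timer(utterance: str):
    """Return {'name', 'seconds'} for a matching request, else None."""
    m = TIMER_PATTERN.fullmatch(utterance.strip().rstrip("."))
    if m is None:
        return None  # no match: reject instead of guessing
    unit = m["unit"].lower().rstrip("s")  # "minutes" -> "minute"
    return {"name": m["name"], "seconds": int(m["amount"]) * UNIT_SECONDS[unit]}


print(parse_timer("set a pasta timer for 9 minutes"))
print(parse_timer("set a timer for 10 minutes"))
print(parse_timer("play some jazz"))
```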

The Latency Question

Here are the honest numbers from my testing, based on 200 voice commands logged across two weeks:

- Wake word detection: 200-400ms

- Speech-to-text (Whisper tiny-int8): 1.0-1.5s

- Intent processing (Home Assistant): 50-100ms

- Text-to-speech (Piper): 0.5-0.8s

- Total round-trip: 1.8-2.8s
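The total range is just the per-stage ranges added up. The sketch below reproduces it from the stage figures listed above. The stage extremes don't necessarily occur together in a single command, so the sums are bounds rather than observed values.

```python
# Per-stage latency ranges from the 200-command log above, in milliseconds.
STAGES_MS = {
    "wake_word": (200, 400),
    "speech_to_text": (1000, 1500),
    "intent": (50, 100),
    "text_to_speech": (500, 800),
}

best = sum(lo for lo, _ in STAGES_MS.values())
worst = sum(hi for _, hi in STAGES_MS.values())
print(best, worst)  # 1750 2800
```

The 1.75-second best case rounds to the 1.8 seconds listed above. Speech-to-text accounts for more than half of the total at both ends, which is why the Whisper model size matters more than any other setting.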

Alexa typically responds in 1.5-2.0 seconds. So yes, my local setup is slower. But not by the margin I feared. And every millisecond of that processing happens on hardware I own, on a network I control.

Interestingly, the latency stays consistent. Cloud assistants sometimes respond in 0.8 seconds, sometimes in 4 seconds, depending on server load and internet conditions. My local system always falls in that 1.8-2.8 second window. That predictability actually feels faster in practice, even though the average is slightly higher.

What Are the Performance Benchmarks After 90 Days?

I logged system metrics weekly throughout this journey. Here's what the numbers look like at the three-month mark:

- Uptime: 99.2% (two reboots -- one planned kernel update, one power outage)

- Voice recognition accuracy: 93% for simple commands, 78% for complex multi-part requests

- CPU temperature: 52 degrees Celsius average at idle, 62 degrees Celsius peak during voice processing

- RAM usage: 3.1GB baseline (Home Assistant + all voice add-ons), peaks at 5.4GB during simultaneous satellite streams

- Storage consumed: 12GB for the full OS, Home Assistant, and all voice models
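A little arithmetic turns the uptime and RAM figures into more concrete terms. The sketch treats "three months" as 90 days, which is an approximation. Everything is derived from the list above rather than measured separately.

```python
DAYS = 90            # "three months", approximated
UPTIME = 0.992       # from the list above
RAM_TOTAL_GB = 8.0   # Pi 5 8GB model
RAM_PEAK_GB = 5.4    # peak with simultaneous satellite streams

downtime_hours = DAYS * 24 * (1 - UPTIME)
print(f"{downtime_hours:.1f} h of downtime")                 # 17.3 h
print(f"{RAM_TOTAL_GB - RAM_PEAK_GB:.1f} GB free at peak")  # 2.6 GB
```

The 5.4GB peak is also why a 4GB Pi 5 ends up swapping under load.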

The 93% accuracy number deserves context. That's measured against commands I'd consider "normal" -- things like "turn on the porch light" or "what's the bedroom temperature." When I mumble, speak from across the room, or try commands while the TV's blaring, accuracy drops to maybe 70%. Alexa handled background noise better, no question.

Three Months Later -- Was It Worth It?

I haven't plugged an Echo back in. That says more than any benchmark.

The system isn't perfect. It's slower. It can't tell me the weather without an internet-connected integration (I use the Met.no integration, which pulls forecast data over the internet). It won't order me pizza or play Spotify natively.

But it does what I actually need from a voice assistant 90% of the time: lights, climate, timers, and automations. All without sending a single audio recording to anyone's server.

The journey from five cloud speakers to a single Pi running everything locally took me about three weekends of focused work. The first weekend was hardware assembly and basic Home Assistant configuration. The second was voice pipeline setup and wake word training. The third was troubleshooting satellite placement, audio gain, and WiFi interference. After that, I've spent maybe 20 minutes total on maintenance -- mostly just updating add-ons when new versions drop.

Would I recommend this setup to someone who's never touched Home Assistant? Probably not as a first project. Start with basic automations, get comfortable with the platform, then add voice control. The voice stack builds on top of a working Home Assistant installation -- it's not a standalone product.

For anyone already running Home Assistant, though, this is the most satisfying upgrade I've made to my smart home in years. Total hardware cost was under $120. Setup took about three weekends. And my microwave no longer triggers a conversation with a corporation.

Frequently Asked Questions

Can I run a voice assistant without cloud services?

Yes. Home Assistant's Year of the Voice project provides a complete local stack that runs entirely on your hardware. Whisper handles speech-to-text and Piper handles text-to-speech, both processing audio locally without any internet connection. This means your voice commands are never sent to Amazon, Google, or Apple servers. The setup requires Home Assistant OS 2023.5 or newer, and a Raspberry Pi 5 with 8GB RAM is the practical minimum for smooth performance. You also need the Wyoming integration in Home Assistant to connect Whisper and Piper into a local Assist pipeline. The hardest part isn't the software; it's getting wake words to trigger reliably across multiple rooms. The openWakeWord engine supports custom wake word training, so you're not stuck with built-in options like "ok Nabu" or "hey Jarvis." The whole system runs offline by default, apart from optional integrations such as weather. Your home automation keeps working during internet outages because every command stays on your local network from start to finish.

What hardware do I need for local voice processing?

A Raspberry Pi 5 with 8GB RAM is the minimum that handles Whisper and Piper running simultaneously alongside Home Assistant without hitting memory limits. The 4GB Pi 5 works but swaps memory under load. The Pi 4 with any RAM is too slow for real-time Whisper processing: response times exceed 4 seconds, which feels unusable in practice. For satellite microphones in other rooms, ESP32-S3 development boards with INMP441 MEMS microphones cost about $8-12 each and run the ESPHome firmware. They stream audio to the main Pi over Wi-Fi. Total hardware cost for a two-room setup runs $120-150. That breaks down as roughly $95 for the Pi 5 base station, plus two ESP32 satellite boards and microphones. You'll also want a small speaker at each satellite location; a basic 3W USB-C speaker works well enough for voice responses.

How fast is local voice recognition compared to Alexa?

On a Raspberry Pi 5 running the Whisper tiny-int8 quantized model, speech-to-text processing takes about 1.2 seconds for a typical command. Adding Piper TTS for the spoken response brings the total round-trip latency to roughly 2.0-2.5 seconds from the end of your command to the start of the audio response. Alexa averages 1.5-2.0 seconds for the full round-trip under good network conditions. So local processing is slower by about half a second to one second. That gap shrinks if your internet connection is poor or if Alexa's servers are slow -- which happens more than you'd think during peak hours. The Whisper small model improves accuracy noticeably over tiny-int8 but adds about 0.8 seconds of processing time on a Pi 5. For most home automation commands -- lights, locks, thermostats -- the tiny model accuracy is sufficient. The speed trade-off is real but acceptable once you've used it for a week.